Seedance 2.0 AI Video Generator

Create stunning videos with Seedance 2.0, a professional video generation model that supports multi-modal input and precise referencing. By deeply integrating images, videos, audio, and text, it enables controllable visual expansion, motion replication, and audio-visual synchronization.

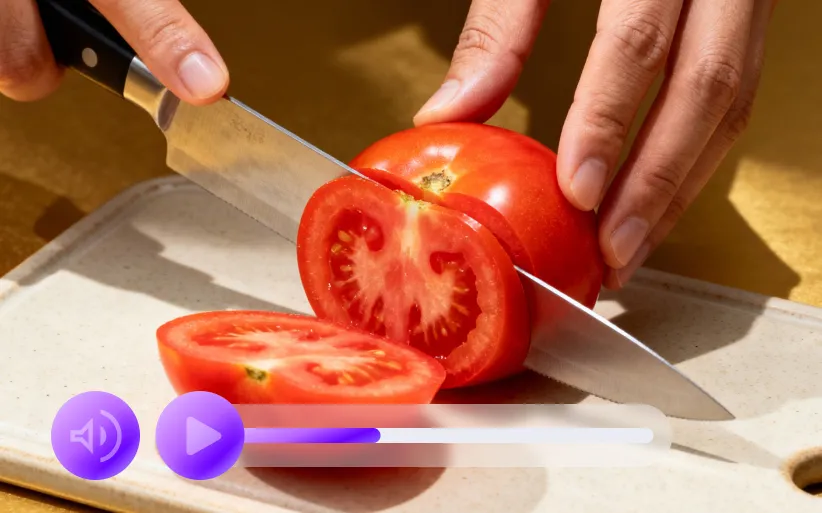

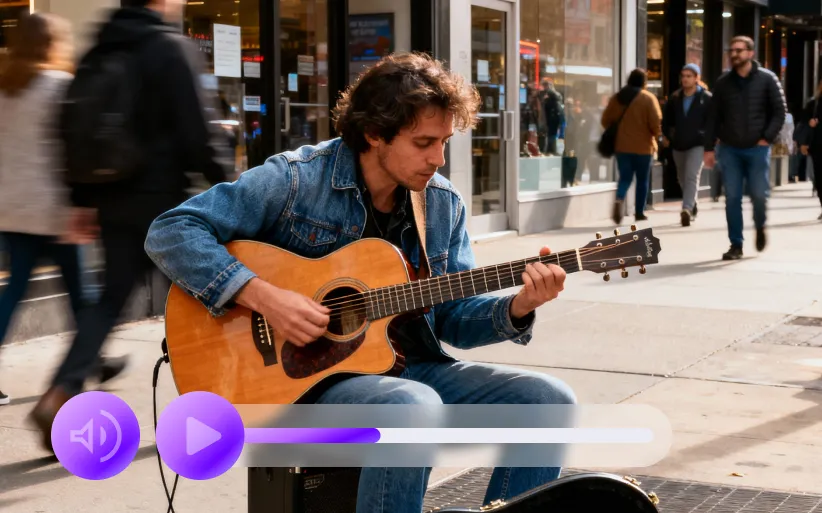

Video Examples of Seedance 2.0 AI Video Generator

By uploading a portrait and a dance reference video, the model generates dynamic clips with precise motion consistency and natural camera transitions.

Seedance 2.0 AI Video Model

In tasks combining static product photos with specific background music, it produces promotional materials with highly synchronized audio-visual rhythms. For existing clips, the model achieves seamless connections in lighting, character features, and environmental atmosphere.

Check MoreCore Features of Seedance 2.0 AI Video Model

Built on the philosophy of being "referable, editable, and extendable," this AI video model focuses on multi-modal fusion and stable control of complex visual elements.

Multi-modal Input Integration

The system handles images, videos, and audio materials simultaneously, combined with text instructions for unified generation. Different media types are parsed and reorganized within the same workflow to build complex narrative structures or multi-dimensional visual combinations, significantly enhancing creative flexibility.

Unified Processing

Structural Reorganization

Creative Flexibility

Precise Reference and Replication

Users can specify individual traits, motion paths, or cinematic languages within the reference material. The model understands and replicates these elements in new videos, making it ideal for motion transfer, specific pose imitation, or mimicking complex camera trajectories.

Feature Extraction

Motion Transfer

Precise Duplication

Consistency Control

During multi-shot generation or expansion, the model locks facial details, clothing textures, and environmental styles. By reducing visual drift, it ensures that long sequences remain stable and logically coherent, meeting professional standards for visual rigor.

Visual Anchoring

Logical Coherence

Drift Reduction

Advantages of Seedance 2.0 AI Video Model

By simplifying operational paths and integrating workflows, the model allows creators to focus on conceptual intent rather than technical complexities.

Natural Language Driven

Complex tasks are completed by describing creative goals, such as designating a specific asset as the opening frame or referencing a specific rhythm. This interaction removes the need for tedious configuration, lowering the barrier from inspiration to final visual product.

Audio-Visual Synchronization

Built-in audio analysis and rhythm matching mechanisms automatically adjust visual motion frequency based on background music. The system can also generate matching ambient sounds to enhance immersion and realism from a multi-sensory perspective.

Iterative Efficiency

Results can be extended or fine-tuned directly without starting from scratch. This non-linear editing logic is perfect for workflows requiring repeated optimization of scripts or shot structures, significantly boosting output speed.

Cross-Scenario Adaptation

From marketing materials to high-end cinematic pre-visualization, the model supports diverse creation types within a unified framework. This universal capability effectively reduces the cost of switching between different tools and enhances production stability.

Application Scenarios of Seedance 2.0 AI Video Generator

With its precise control and multi-modal integration, the tool is widely applied across professional imaging and marketing sectors.

Advertising and Brand Content

Generate new product displays by referencing existing brand styles. This allows teams to maintain visual identity while rapidly testing various creative directions and increasing response speed.

Education and Instructional Video Production

Combine static instructional images with voiceovers to generate visualized teaching segments. Dynamic demonstrations help clarify complex theories through intuitive visual feedback that is easier for audiences to grasp.

Dance and Motion Creation

Apply professional motion references to different digital characters or virtual scenes. This provides technical support for dance instruction, motion design, and performances for virtual avatars.

Usage Steps of Seedance 2.0 AI Video Generator

1. Step 1

Upload base materials such as images, videos, or audio, and organize the specific elements intended for reference.

2. Step 2

Describe creative goals in natural language, noting specific materials or visual effects to be referenced.

3. Step 3

Generate the initial video and perform further expansion or targeted local modifications to refine the overall structure.